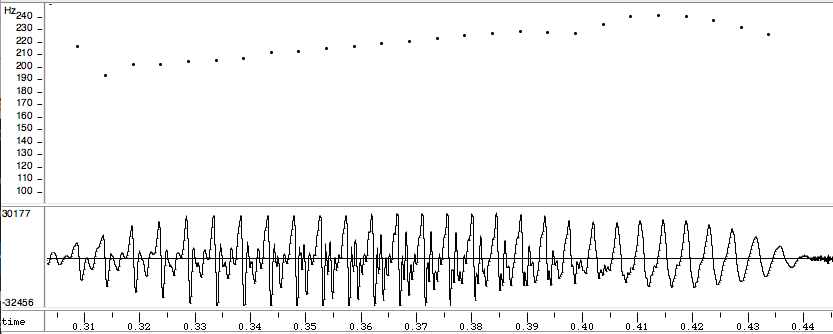

To train the classifier, specify all the classifier options, then train it and print the cross-validation accuracy. crossval and kfoldLoss (Statistics and Machine Learning Toolbox) are used to compute the cross-validation accuracy for the KNN classifier.

In linguistics, speech synthesis, and music, the pitch contour of a sound is a function or curve that tracks the perceived pitch of the sound over time. Pitch contour may include multiple sounds utilizing many pitches, and can relate the frequency function at one point in time to the frequency function at a later point.

Pitch contour is fundamental to the linguistic concept of tone, where the pitch or change in pitch of a speech unit over time affects the semantic meaning of a sound. It also indicates intonation in pitch accent languages.

One of the primary challenges in speech synthesis technology, particularly for Western languages, is to create a natural-sounding pitch contour for the utterance as a whole. Unnatural pitch contours result in synthesis that sounds "lifeless" or "emotionless" to human listeners, a feature that has become a stereotype of speech synthesis in popular culture.

In music, the pitch contour focuses on the relative change in pitch over time of a primary sequence of played notes. The same contour can be transposed without losing its essential relative qualities, such as sudden changes in pitch or a pitch that rises or falls over time.

Michael Friedmann's methodology for analyzing pitch contour, often used in the analysis of post-tonal music, assigns numeric values to notate where each pitch falls in relation to the others within a musical line: the lowest pitch is assigned "0" and the highest pitch is assigned the value n-1, where n is the number of pitches within the segmentation. Therefore, a contour that follows the sequence low, middle, high would be labeled as contour class <0 1 2>.
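Friedmann's numbering, as described in this post, can be sketched in a few lines of Python. This is only an illustration: the function name `contour_class`, the use of MIDI note numbers as pitches, and the choice to give repeated pitches the same rank are all assumptions made here, not part of the description above.

```python
def contour_class(pitches):
    """Rank each pitch within the line: lowest -> 0, highest -> n-1.

    Assumption: n counts distinct pitches, so repeated pitches share a rank.
    """
    ranks = {pitch: rank for rank, pitch in enumerate(sorted(set(pitches)))}
    return [ranks[pitch] for pitch in pitches]


# Pitches as MIDI note numbers (an assumed representation).
melody = [60, 64, 67]                  # low, middle, high
transposed = [p + 5 for p in melody]   # same shape, five semitones higher

print(contour_class(melody))
print(contour_class(transposed) == contour_class(melody))
```

Because only the relative ordering of the pitches is used, transposing the line leaves the contour class unchanged, which matches the point that a contour keeps its essential qualities under transposition.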
Characterizing the source is an important part of characterizing the speech system. As an example of voiced and unvoiced speech, consider a time-domain representation of the word "two" (/T UW/). The consonant /T/ (unvoiced speech) looks like noise, while the vowel /UW/ (voiced speech) is characterized by a strong fundamental frequency.

The audio files in this example are stored under 'C:\Users\jblock\AppData\Local\Temp\commonvoice\train\clips', for example common_voice_en_116626.wav, common_voice_en_116631.wav, and common_voice_en_116643.wav.

The splitEachLabel function of audioDatastore splits the datastore into two or more datastores. The resulting datastores have the specified proportion of the audio files from each label. In this example, the datastore is split into two parts: 80% of the data for each label is used for training, and the remaining 20% is used for testing. The countEachLabel method of audioDatastore is used to count the number of audio files per label. In this example, the label identifies the speaker.

The features are then normalized as features = (features-M)./S, where M and S are presumably the mean and standard deviation of the features.

Training a Classifier

Now that you have collected features for all 10 speakers, you can train a classifier based on them. In this example, you use a K-nearest neighbor (KNN) classifier. KNN is a classification technique naturally suited for multiclass classification. The hyperparameters for the nearest neighbor classifier include the number of nearest neighbors, the distance metric used to compute distance to the neighbors, and the weight of the distance metric. The hyperparameters are selected to optimize validation accuracy and performance on the test set. In this example, the number of neighbors is set to 5 and the metric for distance chosen is squared-inverse weighted Euclidean distance. For more information about the classifier, refer to fitcknn (Statistics and Machine Learning Toolbox).
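To make the normalization step and the squared-inverse weighted vote more concrete, here is a minimal pure-Python sketch. It is not a reimplementation of fitcknn: the helper names (`zscore_fit`, `zscore_apply`, `knn_predict`), the two-speaker toy data, and the reading of M and S as the per-feature mean and sample standard deviation are all assumptions made for illustration.

```python
import math
import statistics
from collections import defaultdict


def zscore_fit(rows):
    """Per-feature mean M and sample standard deviation S (assumed meaning of M, S)."""
    columns = list(zip(*rows))
    M = [statistics.mean(col) for col in columns]
    S = [statistics.stdev(col) or 1.0 for col in columns]  # guard against S == 0
    return M, S


def zscore_apply(rows, M, S):
    """Element-wise (features - M) / S."""
    return [[(x - m) / s for x, m, s in zip(row, M, S)] for row in rows]


def knn_predict(train_X, train_y, query, k=5):
    """Vote among the k nearest neighbours, each weighted by 1 / distance^2."""
    neighbours = sorted(
        (math.dist(x, query), label) for x, label in zip(train_X, train_y)
    )[:k]
    votes = defaultdict(float)
    for distance, label in neighbours:
        votes[label] += 1.0 / (distance ** 2 + 1e-12)  # epsilon avoids division by zero
    return max(votes, key=votes.get)


# Hypothetical toy features: [pitch in Hz, one MFCC coefficient] per frame.
train_X = [[110.0, 1.0], [115.0, 1.2], [120.0, 0.9],
           [210.0, -0.8], [220.0, -1.1], [205.0, -1.0]]
train_y = ["speaker_a"] * 3 + ["speaker_b"] * 3

M, S = zscore_fit(train_X)
train_Xn = zscore_apply(train_X, M, S)
query = zscore_apply([[118.0, 1.1]], M, S)[0]  # reuse the training M and S

print(knn_predict(train_Xn, train_y, query, k=5))
```

Note that the query is scaled with the M and S computed from the training data, so new speech signals go through the same feature scaling as the training set. Without normalization, the pitch column (hundreds of Hz) would dominate the Euclidean distance.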
Speech can be broadly categorized as voiced and unvoiced. In the case of voiced speech, air from the lungs is modulated by the vocal cords and results in a quasi-periodic excitation. The resulting sound is dominated by a relatively low-frequency oscillation, referred to as pitch.

In the case of unvoiced speech, air from the lungs passes through a constriction in the vocal tract and becomes a turbulent, noise-like excitation. In the source-filter model of speech, the excitation is referred to as the source, and the vocal tract is referred to as the filter.
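Because voiced speech is quasi-periodic, its pitch can be estimated from how strongly the signal correlates with a delayed copy of itself. The sketch below uses a plain autocorrelation search on a synthetic tone. It is one simple technique chosen for illustration and is not necessarily the pitch estimator used in this example; the function name `estimate_pitch`, the 50 to 400 Hz search range, and the test signal are assumptions.

```python
import math


def estimate_pitch(signal, fs, fmin=50.0, fmax=400.0):
    """Return the pitch in Hz from the lag with the largest autocorrelation.

    Only lags corresponding to frequencies between fmin and fmax are searched
    (an assumed range).
    """
    lag_min = int(fs / fmax)
    lag_max = int(fs / fmin)
    best_lag, best_value = lag_min, float("-inf")
    for lag in range(lag_min, lag_max + 1):
        value = sum(signal[i] * signal[i + lag] for i in range(len(signal) - lag))
        if value > best_value:
            best_lag, best_value = lag, value
    return fs / best_lag


# Synthetic "voiced" signal: a 200 Hz fundamental plus a weaker second harmonic.
fs = 8000
f0 = 200.0
signal = [
    math.sin(2 * math.pi * f0 * n / fs) + 0.5 * math.sin(2 * math.pi * 2 * f0 * n / fs)
    for n in range(800)
]

print(estimate_pitch(signal, fs))
```

On noise-like unvoiced frames the autocorrelation has no clear peak, so an estimate like this is only meaningful on voiced segments.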

Pitch and MFCC are extracted from speech signals recorded for 10 speakers. These features are used to train a K-nearest neighbor (KNN) classifier. Then, new speech signals that need to be classified go through the same feature extraction. The trained KNN classifier predicts which one of the 10 speakers is the closest match.

This section discusses pitch, zero-crossing rate, short-time energy, and MFCC. Pitch and MFCC are the two features that are used to classify speakers. Zero-crossing rate and short-time energy are used to determine when the pitch feature is used.
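As a rough illustration of how zero-crossing rate and short-time energy can gate the pitch feature, the following sketch flags a frame as voiced when its zero-crossing rate is low and its energy is not negligible. The function names, the thresholds (`zcr_max`, `energy_min`), and the synthetic frames are hypothetical choices for this sketch, not values taken from the example.

```python
import math
import random


def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)


def short_time_energy(frame):
    """Mean squared amplitude of the frame."""
    return sum(x * x for x in frame) / len(frame)


def is_voiced(frame, zcr_max=0.2, energy_min=0.01):
    """Hypothetical rule: voiced frames have a low ZCR and non-trivial energy."""
    return zero_crossing_rate(frame) < zcr_max and short_time_energy(frame) > energy_min


fs = 8000
# Voiced-like frame: a 150 Hz tone. Unvoiced-like frame: low-level uniform noise.
voiced_frame = [math.sin(2 * math.pi * 150 * n / fs) for n in range(400)]
rng = random.Random(0)
unvoiced_frame = [rng.uniform(-0.3, 0.3) for _ in range(400)]

print(is_voiced(voiced_frame), is_voiced(unvoiced_frame))
```

Noise-like frames change sign far more often than a low-frequency periodic signal, which is why the zero-crossing rate separates the two cases here. In practice the thresholds would need tuning on real recordings.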
